Thursday, December 7, 2017

Blockchains, Bubbles, and You

Back In The Saddle Again

Monday, August 11, 2014

AFoD Blog Interview With Andrew Case

Andrew Case’s Professional Biography

Andrew is a digital forensics researcher, developer, and trainer. He has conducted numerous large-scale digital investigations and has performed incident response and malware analysis across enterprises and industries. Prior to focusing on forensics and incident response, Andrew's previous experience included penetration tests, source code audits, and binary analysis. He is a co-author of “The Art of Memory Forensics”, a book being published in summer 2014 that covers memory forensics across Windows, Mac, and Linux. Andrew is the co-developer of Registry Decoder, a National Institute of Justice funded forensics application, as well as a core developer of The Volatility Framework. He has delivered trainings to a number of private and public organizations as well as at industry conferences. Andrew's primary research focus is physical memory analysis, and he has presented his research at conferences including Black Hat, RSA, SOURCE, BSides, OMFW, GFirst, and DFRWS. In 2013, Andrew was voted “Digital Forensics Examiner of the Year” by his peers within the forensics community.

AFoD Blog: What was your path into the digital forensics field?

Andrew Case: My path into the digital forensics field started with a deep interest in computer security and operating system design. While in high school I realized that I should be doing more with computers than just playing video games, chatting, and doing homework. This led me to start teaching myself to program, and eventually to take Visual Basic 6 and C++ elective classes in my junior and senior years. Towards the end of high school I had become pretty obsessed with programming and computer security, and I knew that I wanted to study computer science in college.

I applied to and was accepted into the computer science program at Tulane University in New Orleans (my hometown), but Hurricane Katrina had other plans for me. On the way to new student orientation in August 2005, I received a call that it was cancelled due to Katrina coming. That would be the closest I ever came to being an actual Tulane student, which obviously put a dent in my college plans.

The one bright side to Katrina was that between August 2005 and finally starting college in January 2006, I had almost six months of free time to really indulge my computer obsession. I spent nearly every day and night learning to program better, reading security texts (Phrack, Uninformed, Blackhat/Defcon archives, 29A, old mailing list posts, related books, etc.), and deep diving into operating system design and development. My copy of Volume 3 of the Intel Architecture manuals, which I read cover-to-cover during this time, is my favorite keepsake of Katrina.

This six-month binge led to two areas of interest for me: exploit development and operating system design. Due to these interests, I spent a lot of time learning reverse engineering and performing deep analysis of the runtime behavior of programs. I also developed a 32-bit Intel hobby OS along the way that, while not overly impressive compared to others, routed interrupts through the APIC, supported the notion of userland processes, and had very basic PCI support.

By the time January 2006 arrived, I was expecting to finally start college at Tulane. These plans came to a quick halt, though: in the first week of January, two weeks before classes were to start, Tulane dropped all of its science and engineering programs. Because of the short notice, I had very little time to pick another school if I didn't want to fall another semester behind. The idea of finding a school outside of the city, moving there, and starting classes, all in about ten days, seemed pretty daunting, so I decided to take a semester at the University of New Orleans. This turned out to be a very smart decision: not only was UNO’s computer science program very highly rated, but it also had a security and forensics track inside of CS.

For my first two years of college I was solely focused on security. At the time forensics seemed a bit… dull. My interest in security led to interesting opportunities, such as working at Neohapsis in Chicago for two summers doing penetration tests and source code audits, but I eventually caught the forensics bug. This was greatly influenced by the fact that professors at UNO, such as Dr. Golden Richard and Dr. Vassil Roussev, and students, such as Vico Marziale, were doing very interesting work and publishing research in the digital forensics space. My final push into forensics came when I took Dr. Richard’s undergraduate computer forensics course. This class throws you deep into the weeds and forces you to analyze raw evidence starting with a hex editor. You eventually move on to more user-friendly tools and automated processing. Once I saw the power of forensic analysis I was hooked, and my previous security and operating systems knowledge certainly helped ease the learning curve.

Memory forensics was also becoming a hot research area at the time, and when the 2008 DFRWS challenge came out it seemed like the time for me to fully switch my focus to forensics. The 2008 challenge was a Linux memory sample that needed to be analyzed in order to answer questions about an incident. At the time, existing tools only supported Windows, so new research had to be performed. Due to my previous interest in operating system internals, I had already studied most of the Linux kernel, so it seemed pretty straightforward to extract data structures I already understood from a memory sample. My work on this, in conjunction with the other previously mentioned UNO people, led to the creation of a memory forensics tool, ramparser, and the publication of our FACE paper at DFRWS 2008. This also led to my interest in Volatility and my eventual contributions of Linux support to the project.
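To make "extracting data structures from a memory sample" concrete, consider the Linux process list. The kernel keeps it as a circular, doubly linked list threaded through each task_struct's `tasks` member. The sketch below walks such a list in a synthetic, little-endian, 32-bit buffer.

It rests on several assumptions:

- Pointers are treated as plain offsets into the buffer, so there is no virtual-to-physical translation.
- `TASKS_OFFSET`, `PID_OFFSET`, `COMM_OFFSET`, and `build_sample_image` are invented for illustration. Real offsets differ between kernel versions and build configurations.

It is a sketch of the general idea, not ramparser's or Volatility's code.

```python
import struct

# Invented layout for illustration only; real task_struct offsets vary
# with kernel version and build configuration.
TASKS_OFFSET = 0x10  # offset of `tasks` (struct list_head) in task_struct
PID_OFFSET = 0x20    # offset of `pid` (32-bit int)
COMM_OFFSET = 0x24   # offset of `comm` (char[16], NUL-padded)
COMM_LEN = 16
TASK_SIZE = 0x40     # size of each synthetic record


def read_u32(image: bytes, offset: int) -> int:
    """Read a little-endian 32-bit value from the image."""
    return struct.unpack_from("<I", image, offset)[0]


def walk_tasks(image: bytes, init_task: int) -> list[tuple[int, str]]:
    """Follow tasks.next pointers from init_task until the list wraps around."""
    results = []
    seen = set()
    task = init_task
    while task not in seen:  # also stops on corrupted lists that loop early
        seen.add(task)
        pid = read_u32(image, task + PID_OFFSET)
        raw = image[task + COMM_OFFSET : task + COMM_OFFSET + COMM_LEN]
        comm = raw.split(b"\x00", 1)[0].decode("ascii", "replace")
        results.append((pid, comm))
        # tasks.next points at the list_head *inside* the next task_struct,
        # so subtract the member offset to reach the start of that structure
        # (the kernel's container_of idiom).
        task = read_u32(image, task + TASKS_OFFSET) - TASKS_OFFSET
    return results


def build_sample_image(tasks: list[tuple[int, str]], base: int = 0x100) -> bytes:
    """Hypothetical helper: lay out a circular task list in a zeroed buffer."""
    image = bytearray(base + TASK_SIZE * len(tasks))
    for i, (pid, comm) in enumerate(tasks):
        off = base + i * TASK_SIZE
        nxt = base + ((i + 1) % len(tasks)) * TASK_SIZE
        struct.pack_into("<I", image, off + TASKS_OFFSET, nxt + TASKS_OFFSET)
        struct.pack_into("<I", image, off + PID_OFFSET, pid)
        name = comm.encode("ascii")[: COMM_LEN - 1]
        image[off + COMM_OFFSET : off + COMM_OFFSET + len(name)] = name
    return bytes(image)
```

For example, `walk_tasks(build_sample_image([(0, "swapper"), (1, "init"), (1234, "sshd")]), 0x100)` returns the three (pid, name) pairs in list order. A real tool must also translate virtual addresses and validate every pointer before following it.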

AFoD: What was the memory forensics tool you created?

Case: The tool was called ramparser, and it was designed to analyze memory samples from Linux systems. It was created as a result of the previously mentioned DFRWS 2008 challenge. A detailed description of the tool and our combined FACE research can be found here: http://dfrws.org/2008/proceedings/p65-case.pdf. This project was my first real research into memory forensics, and I initially had much loftier goals than would ever be realized. Some of these goals would later be implemented inside Volatility, while some of them, such as kernel-version generic support, still haven’t been done by myself or anyone else in the research community.

Soon after the DFRWS 2008 paper was published, the original ramparser was scrapped due to severe design limitations. First, it was written in C, which made it nearly impossible to implement the generic, runtime data structures that are required to support a wide range of kernel versions. Also, ramparser had no notion of what Volatility calls profiles. Profiles in Volatility allow for plugins to be written generically while the backend code handles all the changes between different versions of an operating system (e.g. Windows XP, Vista, 7, and 8). Since ramparser didn’t have profiles, the plugins had to perform conditional checks for each kernel version. This made development quite painful.
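The profile idea can be sketched in a few lines. Per-version structure layouts live in one table, and a plugin asks for fields by name, so version differences never leak into plugin code.

The profile names (`kernel-A`, `kernel-B`), every offset, the `Profile` class, and the helper `build_sample_task` are invented for this example. None of it reflects Volatility's actual profile format or any real kernel layout.

```python
import struct

# Hypothetical profiles: type -> field -> (offset, struct format).
# Version names and offsets are made up for illustration.
PROFILES = {
    "kernel-A": {"task_struct": {"pid": (0x94, "<i"), "comm": (0x1A4, "16s")}},
    "kernel-B": {"task_struct": {"pid": (0x2B4, "<i"), "comm": (0x470, "16s")}},
}


class Profile:
    """Resolves named structure fields to offsets for one OS version."""

    def __init__(self, name: str):
        self.layouts = PROFILES[name]

    def read(self, image: bytes, type_name: str, base: int, field: str):
        offset, fmt = self.layouts[type_name][field]
        value = struct.unpack_from(fmt, image, base + offset)[0]
        if isinstance(value, bytes):  # fixed-size C string: trim at first NUL
            value = value.split(b"\x00", 1)[0].decode("ascii", "replace")
        return value


def describe_task(image: bytes, profile: Profile, task_addr: int) -> tuple[int, str]:
    """A version-agnostic 'plugin': no offsets or version checks appear here."""
    pid = profile.read(image, "task_struct", task_addr, "pid")
    comm = profile.read(image, "task_struct", task_addr, "comm")
    return pid, comm


def build_sample_task(profile_name: str, pid: int, comm: bytes) -> bytes:
    """Hypothetical helper: one task_struct laid out per the named profile."""
    fields = PROFILES[profile_name]["task_struct"]
    image = bytearray(0x500)
    struct.pack_into(fields["pid"][1], image, fields["pid"][0], pid)
    struct.pack_into(fields["comm"][1], image, fields["comm"][0], comm)
    return bytes(image)
```

The same `describe_task` call works on both layouts. Without the profile layer it would need an if/else branch per kernel version, which is the pain point described above.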

ramparser2 (I am quite creative with names) was a rewrite of the original ramparser in Python. The switch to a higher-level interpreted language meant that much of the misery of C immediately went away. Most importantly, dynamic data structures could be used that would adapt at runtime to the kernel version of the memory sample being analyzed. I ported all of the original ramparser plugins into the Python version and added several new ones.

After this work was complete, I realized that, while my project was interesting, I had no real way of getting other people to use or contribute to it. I also knew that Windows systems were of much higher interest to forensics practitioners than Linux systems and that Volatility, which only supported Windows at the time, was beginning to see widespread use in research projects, incident handling, and malware analysis. I then decided that integrating my work into Volatility would be the best way for my research to actually be used and improved upon by other people. Looking back on that decision now I can definitely say that I made the right choice.

AFoD: For those readers who are not familiar with digital forensics or at least not familiar with memory forensics, can you explain what the Volatility Project is and how you became involved with it?

Case: The Volatility Project was started in the mid-2000s by AAron Walters and Nick Petroni. Volatility emerged from two earlier projects by Nick and AAron, Volatools and The FATkit. These were some of the first public projects to integrate memory forensics into the digital investigation process. Volatility was created as the open source version of these research efforts and was initially worked on by AAron and Brendan Dolan-Gavitt. Since then, a number of people have contributed to Volatility, and it has become one of the most popular and widely used tools within the digital forensics, incident response, and malware analysis communities.

Volatility was designed to allow researchers to easily integrate their work into a standard framework and to feed off each other’s progress. All analysis is done through plugins, and the core of the framework was designed to support a wide variety of capture formats and hardware architectures. As of the 2.4 release (summer 2014), Volatility has support for analyzing memory captures from 32- and 64-bit Windows XP through 8, including the server versions, Linux 2.6.11 (circa 2005) to 3.16, all Android versions, and Mac Leopard through Mavericks.

The ability to easily plug my existing Linux memory forensics research into Volatility was one of the main points that led me to more deeply explore the project. After speaking with Brendan about some of my research and the apparent dead end that was my own project, he suggested I join the Volatility IRC channel and get to know the other developers. Through the IRC channel I met Jamie, Michael, AAron, and other people that I now work with on a daily basis. This also got me in touch with Michael Auty, who is the Volatility maintainer, and who worked with me for hours a day for several weeks in order to get the base Linux support in place. Once this base support was added I could then trivially port my existing research into Volatility Linux plugins.

AFoD: I know we have people who read the blog who aren't day-to-day digital forensics people so can you tell us what memory forensics is and why it's become such a hot topic in the digital forensics field?

Case: Memory forensics is the examination of physical memory (RAM) to support digital forensics, incident response, and malware analysis. It has an advantage over other types of forensics, such as network and disk, in that much of the system state relevant to investigations only appears in memory. This can include artifacts such as running processes, active network connections, and loaded kernel drivers. There are also artifacts related to the use of applications (chat, email, browsers, command shells, etc.) that only appear in memory and are lost when the system is powered down. Furthermore, attackers are well aware that many investigators still do not perform memory forensics and that most AV/HIPS systems don’t thoroughly look in memory (if at all). This has led to the development of malware, exploits, and attack toolkits that operate solely in memory. Obviously these will be completely missed if memory is not examined. Memory forensics is also being heavily pushed because of its resilience against malware that can easily fool live tools on the system but has a much harder time hiding within all of RAM.
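Memory analysis is harder to fool than live tools partly because the same information can be recovered in several independent ways and then cross-checked. A rootkit may unlink its process from the kernel's process list (direct kernel object manipulation, or DKOM). That hides it from anything that walks the list, but the process structure itself often still sits in RAM, where a scanner can find it. Volatility's psxview plugin is built on this cross-view principle.

The sketch below shows only the comparison step. It assumes both views have already been extracted as (pid, name) pairs, and it is not psxview's code.

```python
# Each view is a set of (pid, name) pairs recovered from the same memory
# image by a different technique, e.g. walking the OS process list versus
# scanning all of memory for process structures (extraction not shown).
ProcView = set[tuple[int, str]]


def cross_view(list_view: ProcView, scan_view: ProcView) -> dict[str, ProcView]:
    """Compare two independent process listings and report disagreements."""
    return {
        # Found by scanning but missing from the list: possibly hidden by
        # unlinking, though recently exited processes whose structures have
        # not yet been overwritten can land here too.
        "scan_only": scan_view - list_view,
        # Linked in the list but missed by the scanner: worth a second look.
        "list_only": list_view - scan_view,
    }
```

A hit in `scan_only` is a lead to investigate, not proof of a rootkit. That is why tools in this family compare several views rather than just two.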

Besides the aforementioned items, memory forensics is also becoming heavily used because of its ability to support efficient triage at scale and the short time in which analysis can begin once indicators have been found. Traditional triage required reading potentially hundreds of MBs of data across disk, looking for indicators in event logs, the registry, program files, LNK files, etc. This could become too time-consuming with even a handful of machines, much less hundreds or thousands across an enterprise. On the other hand, memory-based indicators, such as the names of processes, DLLs, services, and kernel drivers, can be checked by querying only a few MBs of memory. Tools such as F-Response make this fairly trivial to accomplish across huge enterprise environments and also allow for full acquisition of memory if indicators are found on a particular system.
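The matching step of indicator-based triage across many hosts is simple once each host's process, DLL, service, and driver names have been pulled from memory. The function below is a minimal sketch of that step.

Several details are assumptions: the input format (a dict per host keyed by artifact category), the category names, and all host and indicator names in the example. It does not use or mimic any particular tool's API.

```python
from collections import defaultdict

# Assumed input shape: per host, artifact category -> names found in memory,
# e.g. {"process": [...], "dll": [...], "driver": [...]}.
HostArtifacts = dict[str, list[str]]


def triage(
    hosts: dict[str, HostArtifacts], iocs: dict[str, set[str]]
) -> dict[str, list[tuple[str, str]]]:
    """Return, per host, the (category, name) pairs that match an indicator."""
    # Windows object names are case-insensitive, so compare in lower case.
    wanted = {kind: {n.lower() for n in names} for kind, names in iocs.items()}
    hits = defaultdict(list)
    for host, artifacts in hosts.items():
        for kind, names in artifacts.items():
            for name in names:
                if name.lower() in wanted.get(kind, set()):
                    hits[host].append((kind, name))
    return dict(hits)  # hosts with no matches are omitted
```

Hosts that come back with hits are the candidates for full memory acquisition. The rest can be set aside after only a small amount of data has been examined.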

The last reason I will discuss related to the explosive growth of the use of memory forensics is the ability to recover encryption keys and plaintext versions of encrypted files. Whenever software encryption is used, the keying material must be stored in volatile memory in order to support decryption and encryption operations. Through recovery of the encryption key and/or password, the entire store (disk, container, etc.) can be opened. This has been successfully used many times against products such as TrueCrypt, Apple’s Keychain, and other popularly used encryption products. Furthermore, as files and data from those stores are read into memory they are decrypted so that the requesting application (Word, Adobe, Notepad) can allow for viewing and editing by the end user. Through recovery of these file caches, the decrypted versions of files can be directly reconstructed from memory.
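One well-known way to find keying material in RAM comes from the cold-boot research and is the idea behind the aeskeyfind tool. AES implementations usually keep the expanded key schedule in memory, and a schedule is self-verifying because every round key is derived from the first 16 bytes.

The plain-Python sketch below has clear limits:

- It handles only AES-128.
- It requires an exact, uncorrupted schedule.
- It checks every byte offset, so it is far too slow for a real memory image.

It illustrates the principle and is not presented as the method used by Volatility's TrueCrypt modules or by any particular product.

```python
def _xtime(a: int) -> int:
    """Multiply by x (i.e. 2) in AES's field GF(2^8)."""
    a <<= 1
    if a & 0x100:
        a ^= 0x11B  # reduce modulo x^8 + x^4 + x^3 + x + 1
    return a


def _gmul(a: int, b: int) -> int:
    """Multiply two bytes in GF(2^8)."""
    product = 0
    while b:
        if b & 1:
            product ^= a
        a = _xtime(a)
        b >>= 1
    return product


def _build_sbox() -> list[int]:
    """Derive the AES S-box: field inverse followed by the affine transform."""
    sbox = []
    for x in range(256):
        inv = next((y for y in range(1, 256) if _gmul(x, y) == 1), 0) if x else 0
        s = inv
        for shift in range(1, 5):  # XOR in four left-rotations of inv
            s ^= ((inv << shift) | (inv >> (8 - shift))) & 0xFF
        sbox.append(s ^ 0x63)
    return sbox


SBOX = _build_sbox()


def expand_key_128(key: bytes) -> bytes:
    """AES-128 key expansion (FIPS-197): 16-byte key -> 176-byte schedule."""
    words = [list(key[i : i + 4]) for i in range(0, 16, 4)]
    rcon = 1
    for i in range(4, 44):
        temp = list(words[i - 1])
        if i % 4 == 0:
            temp = temp[1:] + temp[:1]      # RotWord
            temp = [SBOX[b] for b in temp]  # SubWord
            temp[0] ^= rcon
            rcon = _xtime(rcon)
        words.append([a ^ b for a, b in zip(words[i - 4], temp)])
    return bytes(b for word in words for b in word)


def find_aes128_keys(image: bytes) -> list[tuple[int, bytes]]:
    """Report every offset where 176 bytes form a valid AES-128 schedule."""
    hits = []
    for off in range(len(image) - 176 + 1):
        candidate = image[off : off + 16]
        if expand_key_128(candidate) == image[off : off + 176]:
            hits.append((off, candidate))
    return hits
```

Real key finders also handle larger key sizes and tolerate bit errors in degraded memory, which this exact-match sketch does not attempt.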

AFoD: The rumor going around town is that you're involved with some sort of memory forensics book? Is there any truth to that?

Case: That rumor is true! Along with the other core Volatility developers (Michael Ligh, Jamie Levy, and AAron Walters), we have recently written a book: The Art of Memory Forensics: Detecting Malware and Threats in Windows, Linux, and Mac Memory. The book is over 900 pages and provides extensive coverage of memory forensics and malware analysis across Windows, Linux, and Mac. While it may seem like it covers a lot of material, we originally had 1100 pages of content before the editor asked us to reduce the page count. The full table of contents for the book can be found here.

The purpose of this book was to document our collective memory forensics knowledge and experiences, including the relevant internals of each operating system. It also demonstrates how memory forensics can be applied to investigations ranging from analyzing end-user activity (insider threat, corporate investigations) to uncovering the workings of the most advanced threat groups. The book also spends a good bit of time introducing the concepts that are needed to fully understand memory forensics. There is an entire chapter dedicated to memory acquisition, a deeply misunderstood topic that can have drastic effects on people’s subsequent ability to perform proper memory analysis. An added bonus of this chapter is that we worked with the authors of the leading acquisition tools to ensure that our representation of the tools was correct and that we accurately described the range of issues that investigators need to be aware of when performing acquisitions.

The book is structured so that we introduce a topic (processes, kernel drivers, memory allocations, etc.) for a specific operating system, explain the relevant data structures, and then show how Volatility can be used to recover the information in an automated fashion. Volatility was chosen due to our obvious familiarity with it, but also due to the fact that it is the only tool capable of going so deeply and broadly into memory. The open source nature of Volatility means that readers of the book can read the source code of any plugins of interest, modify them to meet the needs of their specific environment, and add on to existing capabilities in order to expand the field of memory forensics. With that said, the knowledge gained from the book is applicable to people using any memory forensics tool and/or those who wish to develop capabilities outside of Volatility.

Along with the book, we will also be releasing the latest version of Volatility. This 2.4 release includes full support for Windows 8, 8.1, Server 2012, and Server 2012 R2, TrueCrypt key and password recovery modules, as well as over 30 new Mac and Linux plugins for investigating malicious code, rootkits, and suspicious user activity. In total, Volatility 2.4 has over 200 analysis plugins across the supported operating systems.

AFoD: What do you recommend to people who are looking to break into the digital forensics field? What would you tell someone who is in high school compared to someone who is already in the middle of a career and looking to make the switch?

Case: To start, there are a few things I would tell both sets of people. First, I consider learning programming to be the most important and essential. The ability to program removes you from the set of investigators that are limited by what their tools can do for them, and the skill also makes you highly attractive to potential employers. As you know, when dealing with advanced malware and attackers, existing tools are only the starting point and many customizations and “deep dives” of data outside tools’ existing functionality are needed to fully understand what occurred. To learn programming, I would recommend starting with a scripting language (Python, Ruby, or similar). These are the easiest to learn and program in and the languages are pretty forgiving. There are also freely accessible guides online as well as great books on all of these languages.

The other skill I would consider essential is at least a moderate understanding of networking. I don't believe that people can be fully effective host analysts without some understanding of networking and how data flows through the environment they are trying to protect or investigate. If the person wants to become a network forensic analyst, then they obviously need this base set of skills to even be considered. To learn the basics of networking, I would recommend starting by reading a well-rated Network+ study book. This will teach you about routing, switching, physical interfaces, subnetting, VLANs, etc. After understanding the hardware devices and how they interact, you should then read Volume 1 of TCP/IP Illustrated. If you can read C, I would recommend reading Volume 2 as well, but know that the book can be brutal. It is over 1000 pages and walks you literally line-by-line through the BSD networking stack. You will be a master if you can finish and understand it all. It took me a month of reading every day after work to get through it during a summer in college. If someone hasn't read TCP/IP Illustrated then I seriously question his or her networking background. To quote a document that I find very inspirational related to security and forensics: "have you read all 3 volumes of the glorious TCP/IP Illustrated, or can you just mumble some useless crap about a 3-way handshake".

As for specific advice to the different audiences, I would strongly recommend that high school students learn at least some electronics and hardware skills. If you are going to study computer science, make sure to take some electrical engineering courses as electives in order to get hands-on experience with electronics. I plan on expanding on this more in the near future, but I truly think that in the next few years not being able to work with hardware will limit one’s career choices and will certainly affect one’s ability to do research. In short, current investigators can get away with interacting with hardware only when performing tasks like removing a hard drive or disassembling components by hand. As phones, tablets, and other devices without traditional hard drives or memory become standard (see “Internet of Things”), the ability to perform actions such as removing flash chips, inspecting hardware configurations, and interacting with systems through hardware interfaces will become commonplace. Without these skills you won’t even be able to image a hard drive. For example, if I gave an investigator with the currently most useful skills a “smart TV” and told him or her to remove the hard drive in a forensically sound manner, do you think it would happen? Would the person grab an electronics kit and start pulling electrical components out? Most people in forensics would have no idea how to do that, myself included.

For people already in the field, I would play to your strengths. If you have a background in programming then use that to your advantage. Explain to your future employer how your programming background will allow you to automate tasks and help out in cases where source code review is needed. Being able to automate tasks is a huge plus and greatly increases efficiency while removing the chance for human error. If a person’s background is networking, then there are many ways he or she could transition into network forensics roles, whether as part of a SOC or as a consultant. When transitioning roles I would make sure to ask any prospective employers about training opportunities at the company. If a person with an IT background can really get into the forensics/IR trenches while also getting quality training once or twice a year, then he or she will quickly catch up to his or her peers.

AFoD: So where can people find you this year? Will you be doing any presentations or attending any conferences?

Case: The remainder of the year will actually be quite busy with speaking engagements. Black Hat just wrapped up, and while there we did a book signing, released Volatility 2.4 at Black Hat Arsenal, and threw a party with the Hacker Academy to celebrate the book’s release. In September I will be speaking at Archc0n in St. Louis, and in October I will be taking my first trip to Canada to speak at SecTor. I may also be speaking at Hacker Halted in October. In November I will be speaking at the Open Memory Forensics Workshop (OMFW) and the Open Source Digital Forensics Conference (OSDFC) along with the rest of the Volatility team. I also have pending CFP submissions to BSides Dallas and the API Cyber Security Summit, both in November. I am currently eyeing some conferences for early next year, including Shmoocon and SOURCE Boston, neither of which I have spoken at previously. Finally, if any forensics/security people are ever coming through New Orleans then they should definitely reach out. Several other local DFIR people and I can definitely show out-of-towners a good time in the city, and we have done so many times.

Wednesday, April 2, 2014

The State Of The Blog

Enough people have been asking me about the fate of the blog that I thought it would make more sense to just crank out a blog post. I’m still here, but my time continues to be so limited that I’ve had to continue to put the blog on hold. A couple years back I started a great new job building out a world class cyber investigations team and that continues to take up the majority of my time. I’m planning a few blog posts about what I have learned as a hiring manager to help those who are looking to break into the field and how best to approach things like resumes, cover letters, and interviews.

What really killed my ability to stay on top of the blog was starting an MBA program last fall. It turns out a full-time job coupled with being a full-time MBA student doesn’t leave much free time. Pretty much anything that doesn’t involve my family, job, or school will be on hold until I graduate in the spring of 2015 or the University of Florida punts me out of their MBA program. I’ve managed to survive one term so far and I’m cramming for finals for my second term this week. Three more terms to go after that.

I’m hoping to carve out some time to crank out a couple blog posts now and again before graduation and then get back to my usual blogging schedule of a blog post every two to four weeks. I have a couple blog posts that I really want to get out this year including some interviews.

Monday, September 23, 2013

Ever Get The Feeling You’ve Been Cheated? (Part 2)

I’m back in graduate school these days, which is one of the reasons why I’m long overdue on this blog post. Returning to school has given me the perspective of a student when thinking about the issue of digital forensics degrees. The more I think about it, the less I like the idea of the digital forensics academic programs compared to some alternatives.

The last blog post resulted in plentiful public and private feedback. A common question was what I expected from graduates of digital forensics programs. I don’t expect someone with a digital forensics degree and no experience to “hit the ground running” where they are immediately cranking out competent digital forensics exams. What I do expect from undergraduate students is that they will be able to perform basic digital forensics exams with about six months of substantial training from my team. I also expect that they will be able to talk intelligently about file system forensics in the initial job interview. If a candidate doesn’t know digital forensics beyond the tools, they were cheated and they’re yet another digital forensics degree victim. I might as well just draw a chalk outline around the chair they sat in for the interview because it’s a crime scene.

If a candidate has a graduate degree in digital forensics, I have the same six month expectation of when they can start to perform acceptable digital forensics exams. Additionally, they had better be able to keep up in an advanced NTFS discussion during the interview. I won't go into the specifics here because I don't want to give away my hiring methods and questions, but I expect a working knowledge of NTFS from the undergraduate degree holders and much more out of the people with a graduate degree. If you have that shiny new digital forensics graduate degree, you also better have something you are passionate about and skilled at when it comes to the digital forensics world.

So how do you get to the place where you can be successful in a job interview and land that first job? In general, forget about getting a digital forensics degree at an undergraduate level. You’re better off building a firm intellectual foundation for yourself by mastering the fundamentals of computer hardware and software by going through a program such as computer engineering, electrical engineering, or a similarly structured information technology program. Most digital forensics programs are just warmed over mediocre information technology programs with enough poorly taught digital forensics content so that the school can call it a digital forensics degree.

If you want to be excellent at digital forensics, you need a strong understanding of the fundamentals of the technology that you are going to be investigating. The medical profession figured this out a long time ago when it came to training doctors. Medical school is about teaching students the fundamentals before they move on to their more specialized job roles. Specialties such as radiology and pathology are specializations in the medical world that are roughly similar to what we do in the technical world. Both of those jobs require a rigorous general education in medical school before more highly specialized training through residency and fellowship educational processes.

If someone in high school were to come to me today and ask me the best way to prepare for a digital forensics career, I would tell them to find the best value they can in a degree such as computer or electrical engineering and to supplement that education with some specialized digital forensics training. The specialized training could take the form of a strong digital forensics undergraduate minor, a graduate or undergraduate certificate program, or a full digital forensics graduate program. Some of the best programs in the digital forensics world aren’t actually full digital forensics programs. You do not have to get a degree in digital forensics to prepare for and begin a rewarding career in the field.

Value is important when it comes to education, which is why I caution students about taking on excessive student loans. Racking up $80,000 in loans for a mediocre digital forensics degree is senseless. I can understand higher student loans if someone is fortunate enough to get into certain top-tier schools such as Caltech, MIT, or Stanford, but the math just isn’t likely to work for an expensive degree in digital forensics from Burning Stump Junction University (BSJU). If you are here in the United States, you likely have very fine options that are being offered in your state schools at in-state tuition prices. You will likely be much better off getting that computer engineering degree from the University of Your State at in-state tuition prices than going into massive debt for a digital forensics degree at BSJU.

Sunday, July 7, 2013

Gary “Doc” Welt (You Can Help Batman)

By way of warning, this blog post has almost nothing to do with digital forensics and everything to do with something more important. One of the nice things about having my own blog is that I am my own editor and I don’t have to ask permission to write about something that has very little to do with the original purpose of the blog.

I originally set out to write a follow-up to my last post dealing with the deficiencies that I’m seeing in digital forensics education. That blog post generated quite a bit of interest and I’m grateful for all of the responses both in public and private. I’ll get back to that topic in my next blog post and, as an added “bonus”, I’ll even talk about the new CCFP “cyber forensics” certification being offered by ISC2.

But none of that seems all that important to me as I write this on the 4th of July weekend, given how many people over the years have sacrificed everything they had to defend the United States of America and the rest of Western Civilization against a whole host of profoundly bad people. Even a cursory glance at world history shows that peace and prosperity are not the natural state of human affairs. Being able to sustain a place like the United States requires an incredible amount of continuous effort by many people, with the brunt of the burden falling on the United States military.

By day, I am a mild mannered digital forensics geek who has the honor and privilege to lead a pack of world-class border collies. By night (and sometimes weekends), among other things, I’m a rookie competitive action shooter. I started doing this early this year and it’s been an amazing experience in large part because of the people involved in the sport. They tend to be some of the nicest and most generous people that I've encountered in many years and this generosity reminds me of the digital forensics community in many ways. My primary game is USPSA action shooting and my home club is the Wyoming Antelope Club in Clearwater, Florida.

It’s through the Wyoming Antelope Club that I became aware of a real live superhero by the name of Gary “Doc” Welt. “Doc” Welt spent around thirty years of his life as a United States Navy SEAL. You can read about Gary’s career here and you will also read why I’m writing this. Gary Welt has been diagnosed with Amyotrophic Lateral Sclerosis (ALS), which is also known as Lou Gehrig’s disease. ALS is a very tough set of cards to be dealt. Gary provides a very clear explanation of what he’s up against in this YouTube video. The life expectancy of someone diagnosed with it tends to be two to five years. There is a small percentage of people who live beyond that time. This is the same disease that Stephen Hawking has and, as CNN explains...

Most people with ALS survive only two to five years after diagnosis. Hawking, on the other hand, has lived more than 40 years since he learned he had the disease, which is also known as Lou Gehrig's Disease in America and motor neuron disease, or MND, in the United Kingdom.

So if anyone has a shot at beating the odds in the face of ALS, it’s a superhero like Gary Welt. What is interesting is that Gary’s military service might be one of the things that increased his risk for getting ALS. The Mayo Clinic reports that:

Recent studies indicate that people who have served in the military are at higher risk of ALS. Exactly what about military service may trigger the development of ALS is uncertain, but it may include exposure to certain metals or chemicals, traumatic injuries, viral infections and intense exertion.

I call Gary Welt a superhero because he is one. Think about it. No one would deny that Batman is a superhero, but he’s a superhero who doesn’t have any intrinsic superpowers. He wasn’t bitten by a radioactive spider or exposed to gamma radiation which provided him special powers. He wasn’t born on Krypton and sent to Earth. Batman is a superhero because he's an exceptionally trained, highly intelligent, and supremely well-conditioned human being with a vast equipment budget. That also describes the US Navy SEALs. Most people can’t even get into their training pipeline, much less complete it, because of the mental and physical toughness that is required. They do incredibly complicated and challenging work with some of the most sophisticated weapons systems in the world. So even if you are mentally and physically tough enough, you aren’t going to become a SEAL if you are a dullard.

What about equipment? We all know that Batman has all sorts of fantastic equipment like the Batmobile, Batcopter, Batcycle, Batboat, and all the rest of his goodies. The SEALs have their own stuff that might as well be right out of a comic book. Check out the picture below.

That’s right. The SEALs have their own version of the Batsub. They just call it a SEAL Delivery Vehicle. Put some capes on those guys and give it a bit more of a snappy name and you’ve got a picture right out of a comic book.

Not enough to convince you? Fine. The SEALs have their own version of the BatBuggy. Look at this:

The SEALs just happen to call their BatBuggy a Desert Patrol Vehicle. Not the most creative name, but a vehicle that can be equipped with a variety of weapons, including a 40mm grenade launcher, doesn’t need one. Good luck with that, Joker.

The only meaningful difference that I can see between a superhero like Batman and a superhero like Gary Welt is that Batman is fictional and “Doc” Welt and the rest of his SEAL brothers are real. “Doc” Welt is a superhero who has devoted his life to fighting bad guys and protecting the rest of us. Now we have an opportunity to try and return the favor by helping him out when he’s in a tough fight. How often do you get to say that you helped a real life superhero?

As the Red Circle Foundation webpage set up for him explains:

We are raising money to help Gary and his wife modify their home for his condition and for wheelchair access. The VA (Veterans Affairs) does a lot of good, but they are a slow moving bureaucracy and time is critical for the Welt family.

The primary way that you can help Gary is donating money via the Red Circle Foundation website. I think the current setup is that any money you give via that portal will result in 90 percent going to Gary and 10 percent to help pay for Red Circle Foundation costs. If you follow Gary’s progress at the HelpGaryWelt Facebook page you’ll see them discussing how that works.

I know the digital forensics community to be a very generous bunch with a culture of sharing and helping one another out. Here’s an opportunity for us to stand together to help someone who has done so much for others. How often can you say that you got to help Batman? Please consider giving anything you can to help a real live superhero like Gary “Doc” Welt.

Photo Credits and Captions

Atlantic Ocean (May 5, 2005) - Members of SEAL Delivery Vehicle Team Two (SDVT-2) prepare to launch one of the team's SEAL Delivery Vehicles (SDV) from the back of the Los Angeles-class attack submarine USS Philadelphia (SSN 690) on a training exercise. The SDVs are used to carry Navy SEALs from a submerged submarine to enemy targets while staying underwater and undetected. SDVT-2 is stationed at Naval Amphibious Base Little Creek, Va., and conducts operations throughout the Atlantic, Southern, and European command areas of responsibility. U.S. Navy photo by Chief Photographer's Mate Andrew McKaskle (RELEASED)

Camp Doha, Kuwait (Feb. 13, 2002) - U.S. Navy SEALs (SEa, Air, Land) operate Desert Patrol Vehicles (DPV) while preparing for an upcoming mission. Each "Dune Buggy" is outfitted with complex communication and weapon systems designed for the harsh desert terrain. Special Operations units are characterized by the use of small units with unique ability to conduct military actions that are beyond the capability of conventional military forces. SEALs are superbly trained in all environments, and are the masters of maritime Special Operations. SEALs are required to utilize a combination of specialized training, equipment, and tactics in completion of Special Operation missions worldwide. Navy SEALs are currently forward deployed in support of Operation Enduring Freedom (OEF). U.S. Navy photo by Photographer's Mate 1st Class Arlo Abrahamson. (RELEASED)

Friday, May 17, 2013

Ever Get The Feeling You’ve Been Cheated?

The famous John Lydon quote strikes me as an appropriate title for a blog post on the state of digital forensics academic programs in the United States. I have been a hiring manager for high tech investigations teams since about 2007 and, before I became a leader, was involved in assessing candidates for the teams that I was on. During the early years, it was rare to see applicants who had degrees in digital forensics, but I’m finding it increasingly common in recent years. One of the things that has struck me is how poorly most of these programs are doing in preparing students to enter the digital forensics field.

It’s not just undergraduate programs that are failing to produce good candidates. I have encountered legions of people with master's degrees in digital forensics who are “unfit for purpose” for entry-level positions, much less for positions that require a senior skill level. The problem is almost never with the students. They tend to be bright and eager people who just aren’t being served all that well. One of the core issues that I see with the programs that aren’t turning out prepared students is the people who are teaching them. It’s almost universal that programs whose professors do not have a digital forensics background are turning out students who don’t understand digital forensics. This seems like an obvious and intuitive statement, but given how many digital forensics programs are being led and taught by unqualified people, it apparently isn’t obvious enough.

If you want to learn to be a good digital forensics examiner, you have to be taught by people who are good digital forensics examiners. If you are interested in learning digital forensics from an academic program, it is your responsibility to look beyond the promotional material and be an informed and educated consumer of your education. The last thing you want is a massive student loan and a degree that looks good on a resume but falls apart during a technical interview for that great entry-level job that you had your heart set on. One of the best ways to make sure you don’t get burned is to carefully study the backgrounds of the professors who will actually be teaching your classes. We’re a bit too early in the development of the digital forensics field to see a host of tenured professors with PhDs in digital forensics, but that doesn’t mean you can’t screen out professors who have no earthly clue what they are teaching. Pay very close attention to the curriculum vitae of the people who are going to be teaching your classes. Does the CV show any actual interest in the field of digital forensics? I have seen many CVs of people teaching digital forensics that don’t show any research or training in the field. It looks like quite a few institutions have decided that digital forensics is hot right now and, to capitalize on it, are pressing unqualified professors into teaching digital forensics classes just so they can lure paying students (and their tuition money) into their programs. Avoid these programs. Your future depends on it.

We are in a time where there are many fine academic programs available to aspiring digital forensics people who wish to learn digital forensics and launch successful careers. Unfortunately, there are more bad programs than good ones. If you are going to spend the time and money on an education, it’s vital that you don’t get cheated. It’s your life and your responsibility to look beyond the glossy promotional material and make sure you are trusting the right people to get you where you want to go.

Sunday, February 24, 2013

Microsoft Windows File System Tunneling

I’m way overdue on doing a proper blog post so I thought I’d swing back into action by showing everyone some really esoteric Microsoft Windows knowledge that I picked up by accident several years ago working on a research project. Around 2010 or so, I was working on Adobe Flash cookie research with Kristinn Gudjonsson of log2timeline fame. During that research, I discovered an odd occurrence dealing with Windows date and time stamps which led me into learning about file system tunneling.

So for purposes of this blog post, you don’t need to know anything about Adobe Flash cookies. I presented on them during the CEIC 2010 Conference, and at the end of my presentation I went into the file system tunneling aspect of what I had found. This blog post covers that portion of the presentation. The only thing you need to know for purposes of this blog post is that Adobe Flash cookies, at least back when I was doing the research on them many years ago, can be deleted and then quickly replaced with a file of the exact same name and file location as the previous one.

For purposes of this demonstration, we’re using an Adobe Flash cookie file with the name “settings.sol”. I think I was using EnCase Version 6 for making this presentation in case anyone is curious about the tool being used.

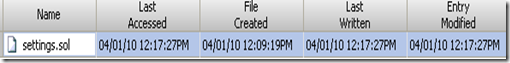

Here is our baseline “settings.sol” file before any changes are made to it.

Here is what happens when a change has been made and the original file has been deleted and replaced with a file of the exact same name in the exact same location. I don’t have the path listed in these screenshots, but each new file is being placed in the exact same location as the old file of the same name.

Here is the brand new file with the exact same name in the exact same location:

So we are seeing what we would expect for a brand new file that was created after the old one was deleted…except look at the file creation timestamp. It exactly matches the file created timestamp of the old file that was just deleted. That’s not accurate, is it? We know the file was actually created on 04/01/10 12:17:27PM, but it’s showing the created file time of the deleted file.

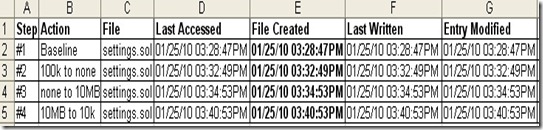

Here is another way of looking at it.

Here we see the old “settings.sol” file that was deleted and we also see the new “settings.sol” file that was created in the exact same location. As you can see, the new file has last accessed, last written, and entry modified time stamps that show when it was created, but it has the wrong file created time. It kept the file creation time of the old file of the exact same name that was in the exact same location.

What on earth is going on?

This almost made me go completely insane trying to figure this out. Like any good digital forensics person, I could absolutely not let this one go and had to run it down until I had an answer. I lost track of how many digital forensics gurus that I contacted to get help. At first, I was a little reluctant to do so in case it turned out to be something obvious that I missed. Who wants to humiliate themselves with a flagrant display of ignorance in front of some digital forensics icon? I was able to save face because the responses I received were all along the lines of telling me that I had an interesting problem on my hands and they didn’t have an answer for me. The person who solved the problem in the end was Eoghan Casey. Eoghan listened to me describe what I was seeing and said he thought I might be observing file system tunneling. I did some further research and it turned out that Eoghan was exactly right.

You can find the official Microsoft write-up on file system tunneling in their Knowledge Base article 172190 at http://support.microsoft.com/kb/172190. The relevant text (and the spelling errors are theirs) from that article is:

The Microsoft Windowsproducts listed at the beginning of this artice contain file system tunneling capabilities to enable compatibility with programs that rely on file systems being able to hold onto file meta-info for a short period of time.

When a name is removed from a directory (rename or delete), its short/long name pair and creation time are saved in a cache, keyed by the name that was removed. When a name is added to a directory (rename or create), the cache is searched to see if there is information to restore. The cache is effective per instance of a directory. If a directory is deleted, the cache for it is removed.

I haven’t performed any recent research to update this information, but at the time I was doing this research in 2010, file system tunneling impacted a broad range of Microsoft operating systems including Windows NT, 2000, and XP (including 64-bit). Microsoft hasn’t updated the article since 2007, but I’d be surprised if it weren’t an issue for Windows 7 and potentially Windows 8.

The Microsoft 172190 article goes on to explain that file system tunneling is an issue for both FAT and NTFS file systems because:

The idea is to mimic the behavior MS-DOS programs expect when they use the safe save method. They copy the modified data to a temporary file, delete the original and rename the temporary to the original. This should seem to be the original file when complete. Windows performs tunneling on both FAT and NTFS file systems to ensure long/short file names are retained when 16-bit applications perform this safe save operation.
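The safe-save sequence in that quote is simple enough to reproduce when you want to test it yourself. Below is a minimal Python sketch of the three steps Microsoft describes: write a temporary file, delete the original, and rename the temporary file to the original name. The function name `safe_save` and the `.tmp` suffix are hypothetical choices, and the sketch assumes the original file already exists. On a Windows FAT or NTFS volume with tunneling enabled, the expectation is that the renamed file comes back with the deleted original's creation time. On other platforms it is just an ordinary file replacement.

```python
import os


def safe_save(path: str, data: bytes) -> None:
    """MS-DOS style "safe save": write a temp file, delete the original,
    then rename the temp file to the original name."""
    temp_path = path + ".tmp"  # hypothetical temp-file naming convention
    with open(temp_path, "wb") as f:
        f.write(data)
        f.flush()
        os.fsync(f.fileno())  # make sure the new data is on disk first
    # Removing the name is the step where Windows (with tunneling enabled)
    # caches the original's short/long names and creation time.
    os.remove(path)
    # Adding the name back is where that cache is consulted.
    os.rename(temp_path, path)
```

Deleting before renaming, rather than renaming over the original, is deliberate here: it matches the delete-then-rename order in the quoted text, and that order is what gives the tunneling cache a chance to act.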

Microsoft explains how to disable file system tunneling in the same 172190 article. All you need to do is go to the HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\FileSystem key in the registry, add a DWORD value called MaximumTunnelEntries, and set it to 0.

So the Microsoft article talks about “file systems being able to hold onto file meta-info for a short period of time”. That short period of time defaults to fifteen seconds. If you wish to change it, that’s yet another registry tweak. Head over to the same key you would use to disable file system tunneling, but this time add a new DWORD value called “MaximumTunnelEntryAgeInSeconds” and set it to the number of seconds you would like. This is all explained in the Microsoft 172190 article.
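To make the cache behavior in KB 172190 concrete, here is a toy in-memory model of it in Python. It is an illustration, not how FAT or NTFS implement tunneling internally. The class and method names are hypothetical. The `max_entries` and `max_age_seconds` parameters only mirror the MaximumTunnelEntries and MaximumTunnelEntryAgeInSeconds registry values. The 15-second default comes from the text above, and the 1024 entry default is purely illustrative. The model also assumes, as a simplification, that the entry limit applies per directory, and it tracks only the creation time, not the short/long name pair.

```python
import time
from collections import OrderedDict
from typing import Callable, Dict, Tuple


class TunnelCache:
    """Toy model of the tunneling cache described in KB 172190.

    Only the creation time is tracked; the real cache also keeps the
    short/long name pair. This is not how Windows implements it internally.
    """

    def __init__(self, max_entries: int = 1024, max_age_seconds: float = 15.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        # Mirrors MaximumTunnelEntries (0 disables); 1024 is only illustrative.
        self.max_entries = max_entries
        # Mirrors MaximumTunnelEntryAgeInSeconds (15 seconds by default).
        self.max_age_seconds = max_age_seconds
        self.clock = clock
        # One cache per directory, keyed by the removed name
        # (lowercased, since Windows names are case-insensitive).
        self._caches: Dict[str, "OrderedDict[str, Tuple[float, float]]"] = {}

    def name_removed(self, directory: str, name: str, created: float) -> None:
        """Delete or rename-away: remember the name's creation time."""
        if self.max_entries == 0:
            return  # tunneling disabled
        cache = self._caches.setdefault(directory, OrderedDict())
        key = name.lower()
        cache.pop(key, None)                  # a newer removal replaces an older one
        cache[key] = (created, self.clock())
        while len(cache) > self.max_entries:  # simplification: per-directory limit
            cache.popitem(last=False)         # evict the oldest entry

    def name_added(self, directory: str, name: str, created_now: float) -> float:
        """Create or rename-into: return the creation time the entry ends up with."""
        cache = self._caches.get(directory)
        if cache:
            entry = cache.pop(name.lower(), None)
            if entry is not None:
                created, removed_at = entry
                if self.clock() - removed_at <= self.max_age_seconds:
                    return created  # tunneled: the old creation time comes back
        return created_now

    def directory_deleted(self, directory: str) -> None:
        """Deleting a directory discards its cache."""
        self._caches.pop(directory, None)
```

With a fake clock, a `settings.sol` that is removed and re-added one second later gets its old creation time back. The same sequence with a 20-second gap, or with `max_entries=0`, gets the new time instead.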

So like any good forensic geek, I wanted to test what I had found because I didn’t want to look foolish in front of a big audience of people at CEIC. I set up an experiment based on making Flash Cookie modifications that would initiate the same deletion and creation process. Again, all you need to know for purposes of this blog post is that I was making changes that resulted in near instant file deletion and creation of files with the exact same name in the exact same location.

First, I used a Windows XP SP2 system that had file system tunneling enabled and obtained these results.

I was making changes that caused these “settings.sol” files to be deleted and then replaced with files of the exact same name. Like before, we are seeing the changes that we would expect from new files being created, in that their accessed, written, and modified time stamps reflect the accurate time that they were created. However, all of these new files are keeping the same file created stamp as the original file. This is file system tunneling at work.
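Because the telltale pattern is a live file whose creation time exactly matches a deleted file of the same name in the same directory, examiners can screen for it mechanically once they have a file listing. The Python sketch below assumes you have already exported file records from your tool of choice into simple objects. The `FileRecord` type and its fields are hypothetical and are not an EnCase export format. An exact match is a lead worth verifying, not proof on its own.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, List, Tuple


@dataclass
class FileRecord:
    """Hypothetical, simplified file-listing record (not a real tool's format)."""
    directory: str
    name: str
    created: datetime
    written: datetime
    deleted: bool


def tunneling_candidates(records: List[FileRecord]) -> List[Tuple[FileRecord, FileRecord]]:
    """Return (live, deleted) pairs that share a directory, a name, and an exact
    creation time, where the live file was last written after the deleted one."""
    deleted: Dict[Tuple[str, str], List[FileRecord]] = {}
    for rec in records:
        if rec.deleted:
            key = (rec.directory.lower(), rec.name.lower())  # case-insensitive names
            deleted.setdefault(key, []).append(rec)

    pairs = []
    for rec in records:
        if rec.deleted:
            continue
        for old in deleted.get((rec.directory.lower(), rec.name.lower()), []):
            if old.created == rec.created and rec.written > old.written:
                pairs.append((rec, old))
    return pairs
```

The check requires that the live file was written later than the deleted one. That rules out a listing that simply contains the same file twice, and it mirrors what the screenshots show: newer written times paired with an inherited created time.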

How do we know for sure? What I did next was to apply the registry modification that I explained before to disable file system tunneling and then to run the experiment again. Again this was a Windows XP SP2 system, but with file system tunneling disabled.

Now we see that all of the file times are matching the time that the files were created including…finally… the file created times.

And because, like all good digital forensics people, I’m always suspicious of what I’m seeing, I repeated the experiment a third time, but this time I used the registry to turn file system tunneling back on, which resulted in...

…the exact same file system tunneling behavior that I had observed at the start of all of this.
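The experiment above can be approximated without Flash cookies or EnCase. The Python sketch below is a stand-in, not the original test. It creates a file, waits, deletes it, immediately recreates a file with the same name in the same directory, and then compares the creation times that `os.stat` reports. The function names are hypothetical. How creation time is exposed by `os.stat` depends on the platform and Python version, as noted in the comments.

```python
import os
import sys
import tempfile
import time
from typing import Optional


def creation_time(path: str) -> Optional[float]:
    """Best-effort file creation time from os.stat, or None if unavailable."""
    st = os.stat(path)
    birth = getattr(st, "st_birthtime", None)  # macOS/BSD; Windows since Python 3.12
    if birth is not None:
        return birth
    if sys.platform == "win32":
        return st.st_ctime  # on older Pythons, st_ctime is creation time on Windows
    return None  # e.g. Linux, where os.stat does not report creation time


def tunneling_observed(directory: Optional[str] = None, name: str = "settings.sol",
                       pause: float = 2.0) -> Optional[bool]:
    """Create a file, wait, delete it, recreate it under the same name right away,
    and report whether the new file kept the old creation time (None = unknown)."""
    if directory is None:
        with tempfile.TemporaryDirectory() as scratch:
            return tunneling_observed(scratch, name, pause)
    path = os.path.join(directory, name)
    with open(path, "wb") as f:
        f.write(b"original")
    before = creation_time(path)
    time.sleep(pause)  # so a genuinely new creation time would differ
    os.remove(path)
    with open(path, "wb") as f:  # recreated well inside the 15-second default window
        f.write(b"replacement")
    after = creation_time(path)
    if before is None or after is None:
        return None
    return after == before
```

Point it at a scratch directory on the volume you care about. On a Windows system with tunneling enabled, the expectation is `True`, and after setting MaximumTunnelEntries to 0 the expectation is `False`. On Linux it returns `None` because `os.stat` does not expose a creation time there.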